Recent News from PSC

Teaming AI with Human Experts Improves Bridge Inspection Accuracy

CMU Group Uses Bridges-2 to Create Video Game for AI to Learn From, Teach Human Engineers

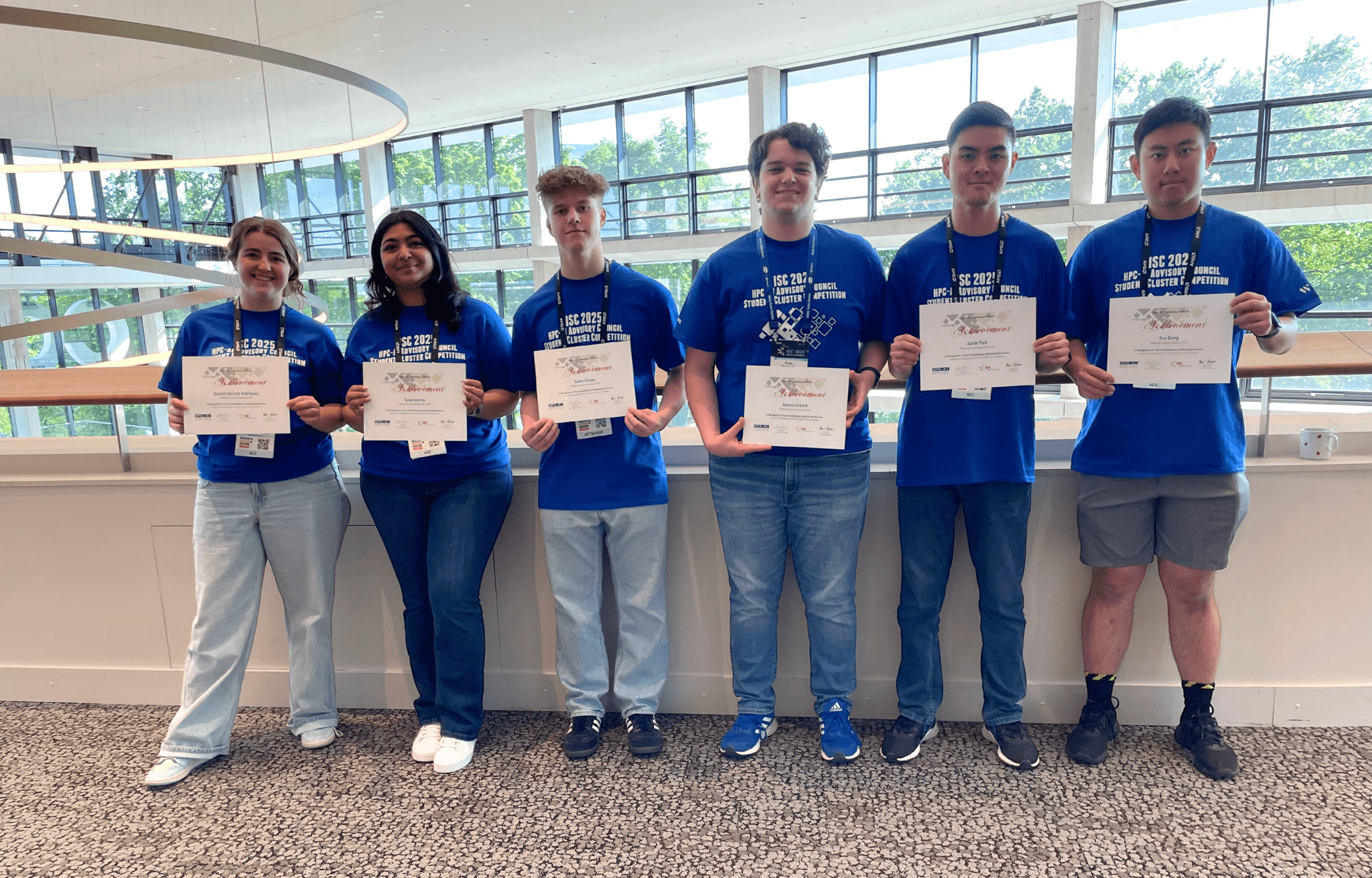

High School Students Study Nanotechnology, AI Heart Disease Detection Using Bridges-2

Unique Program at North Carolina School of Science and Mathematics Introduces Students to High Performance Computing

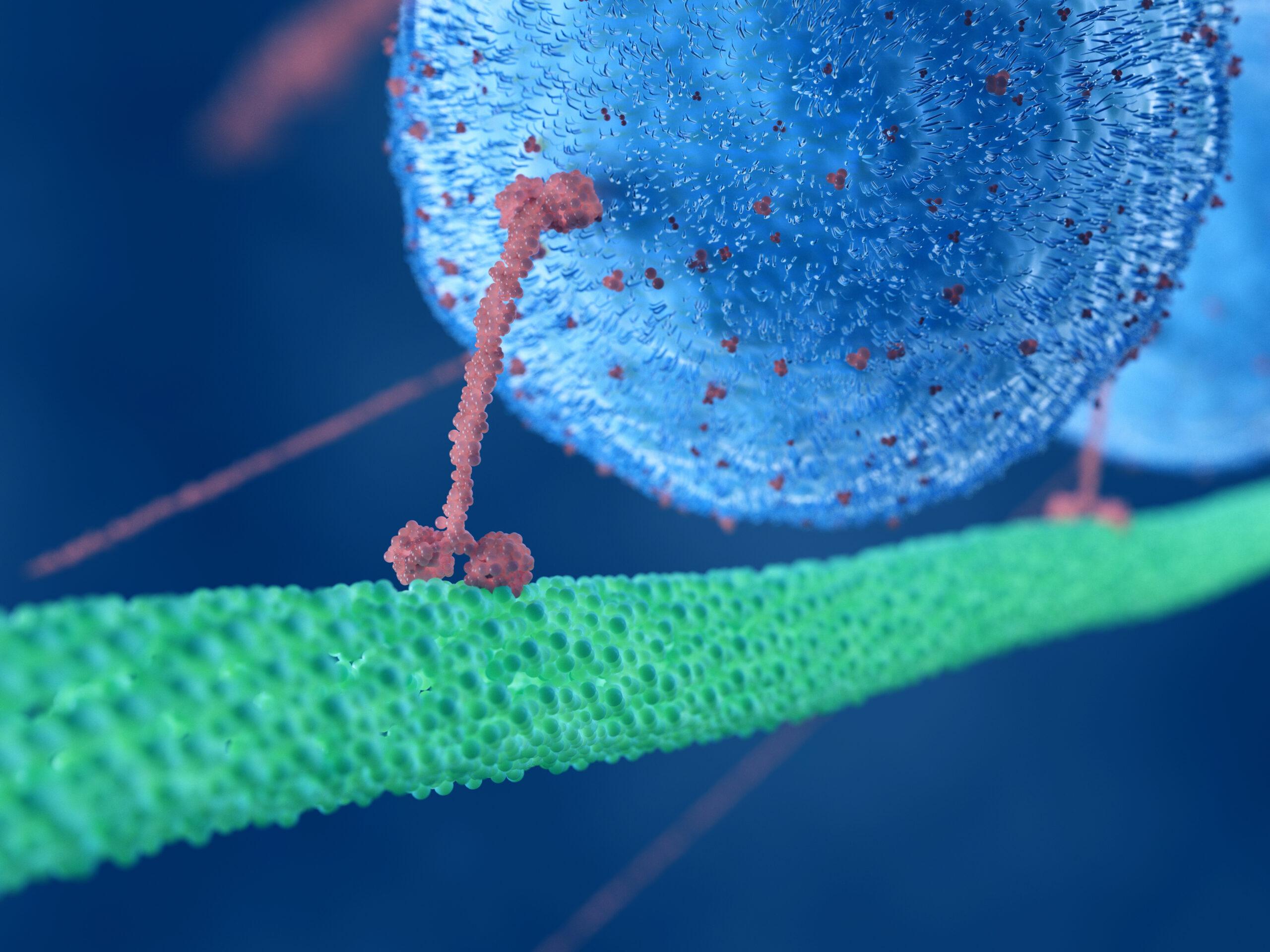

Corrected Numbers Allow Accurate Simulation of Oxygen Kicking Carbon Monoxide out of Hemoglobin

AI-Derived Parameters and Simulations on PSC’s Flagship Bridges-2 Offer Therapeutic Target for Carbon Monoxide Poisoning

Accelerate your research on Bridges-2, our flagship supercomputer

Our featured projects

PSC maintains advanced infrastructure to support computation-heavy research in areas such as data analytics, machine learning, biomolecular simulation, and AI and deep learning. It also provides access to the national cyberinfrastructure community of resources.

Want to help further our research?

Support the next big discovery or inspire the next class of great thinkers with a gift to our center.
